The Rise of the Robot Reporter was the frightening headline in the New York Times last year, when it noted that a third of content from Bloomberg News was generated by the company’s automated system ‘Cyborg’. Frightening at least for journalists, many of whom have long feared that they would end up on the scrap heap, replaced by an algorithm – a growing concern dubbed the new ‘automation anxiety’. When the British trade paper Press Gazette asked its readers this year if they saw AI robots as a threat or an opportunity, more than 1,200 voters (69%) saw them as a threat.

But there is no escaping this development. On 7 December, the London School of Economics’ think tank POLIS hosted its first festival looking at the intersection of journalism and AI. POLIS’ report last year found that of the 71 organisations in 32 countries surveyed, half used AI for newsgathering, two thirds for production and half for distribution. Here in the UK, for example, the Press Association wire service uses software to produce localised data stories at scale and speed, while the Times used AI software to create email newsletters tailored to digital subscribers’ interests.

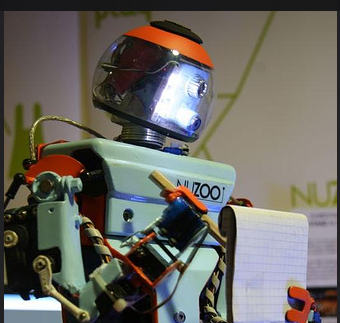

Human journalist vs R2D2 reporter

Unsurprisingly, much of the focus for journalists has been on how their working practices will be affected – will they be made redundant by the newswriting equivalent of Star Wars’ astromech droid R2D2? But, given that AI is going to play an increasingly important role in news – and the Polis report is clear that this will happen – what about the ethical questions that this raises?

I am a co-investigator on the DMINR AI journalism project here at City University of London, where our approach was to involve journalists from the start, so that we could design a tool that will be useful for them. We went into several major newsrooms to talk to reporters and observe how they work, the ways they use AI and their concerns around it. But we also need to ensure that our tool, and others, allow them to use AI in an ethical way.

AI and ethics

One of the first ethical issues identified in the Polis report is concern among editorial staff about whether AI is just a way to save money, but there are many others. While bias in journalism has been widely debated (see, for example, the work of Michael Schudson), what about bias in algorithms? This can range from technical problems with data input to algorithms reflecting all-too-human biases on race and gender – as exposed by the former ProPublica journalist Julia Angwin. She found that a program used by the US criminal justice system to predict whether defendants were likely to reoffend was actually discriminating against black people.

Concerns around filter bubbles and AI, confirmation bias and even the generation of deep fakes have also been raised. Of course, this is nothing new, as a recent detailed piece from Columbia Journalism Review about the 1964 World’s Fair in New York makes clear.

Conversely, AI has been championed as a way of producing more ethical journalism. Could it help uncover connections that otherwise would have been missed? And could the ongoing debate about problems around the biases of AI mean that newsrooms themselves have to be much more transparent and open with their audiences about what they are doing?

The human touch

A decade ago, Jeff Jarvis coined the term ‘process journalism’, as opposed to ‘product journalism’, to describe the changing culture of news reporting, in which journalists no longer produced stories in distant newsrooms but learned to engage with their audiences and update their stories through those interactions. Could being open about AI and its use help restore the declining trust in the UK’s media?

It’s true that there has been more focus on the potential problems around AI than on the upsides. This makes it even more important for researchers and developers in the field to interact with journalists from the start to ensure that these ethical issues are tackled. As one respondent to the Polis report said: “The biggest mistake I’ve seen in the past is treating the integration of tech into a social setting as a simple IT-question. In reality it’s a complex social process.”

And for those journalists who still suffer automation anxiety, there is some cause for optimism. As Carl Gustav Linden wrote in an article for Digital Journalism in 2015, perhaps the question we should be asking is: why, after decades of automation, are there still so many jobs in journalism? The answer: despite 40 years of automation, journalism as a creative industry has shown resilience, and a strong capacity for adaptation and mitigation of new technology. We are still a long way off from the scenario where a journalist R2D2 replaces a human reporter.

Opinions expressed on this website are those of the authors alone and do not necessarily reflect or represent the views, policies or positions of the EJO or the organisations with which they are affiliated.
